Seen in today’s broader industry context, this exercise was not an isolated event. With signals such as Claude Mythos and Project Glasswing, it is becoming increasingly clear that the biggest shift brought by frontier AI models is not that they can find vulnerabilities, but that hidden attack surfaces inside complex systems can now be uncovered with unprecedented speed and persistence.

That is why discussions about enterprise security can no longer stop at traditional scanners, periodic penetration tests, and reactive response. We are entering a new reality: AI is accelerating the rate at which attack surfaces are exposed.

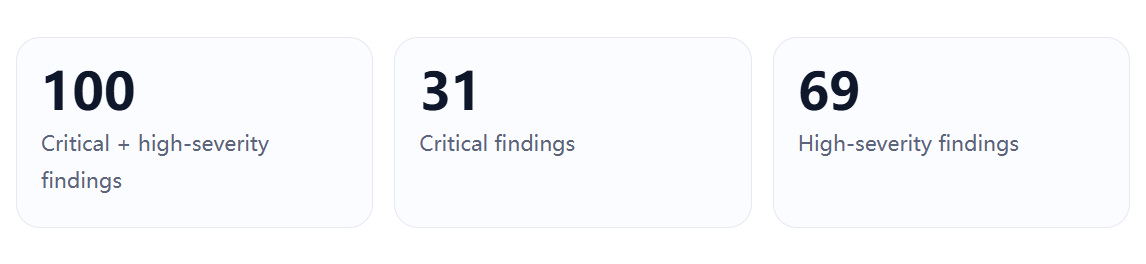

In a recent real-world adversarial exercise, we assessed a large and highly complex production-grade environment. The target had all the characteristics typical of modern large-scale systems: extensive asset sprawl, numerous subsystems, dense API layers, complicated identity flows, and frequent cross-domain and cross-system interactions.

The exercise surfaced 100 critical and high-severity issues, 31 of them critical. Those numbers are striking on their own, but the more important point is what they reveal structurally. This was not a case of repeatedly hitting the same class of bug. The issues were distributed across multiple attack layers: unauthenticated access, object-level authorization failures, function-level authorization failures, exposed credentials and key material, API surface enumeration, session-boundary confusion, cross-origin security weaknesses, and edge-asset or infrastructure drift.

This was not simply “a lot of bugs.” It was a multi-layer attack surface becoming visible all at once.

One major category involved unauthenticated access and weak authorization controls. On the surface, these issues may look simple: an API reachable without login, an object ID that can be enumerated, a business function that accepts requests without validating the caller properly. But at scale, such findings usually indicate something deeper: the authentication model, the authorization model, and the object boundary model are no longer aligned.

When a system simultaneously exhibits unauthorized reads, cross-object access, and unauthorized writes, the problem is rarely a single missed check. More often, it reflects a widening gap between how permissions were designed and how they are actually enforced across the system.
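To make the three models concrete, here is a minimal sketch of a single decision point where authentication, function-level authorization, and object-level authorization are checked together. Everything in it is hypothetical and for illustration only: the `Caller` and `Resource` types, the `REQUIRED_ROLE` table, and the rule that a support role may act on any order are our assumptions, not details of the assessed environment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Caller:
    user_id: Optional[str]  # None means the request is unauthenticated
    roles: frozenset


@dataclass(frozen=True)
class Resource:
    resource_id: str
    owner_id: str


# Hypothetical mapping from business function to the role it requires.
REQUIRED_ROLE = {"read_order": "customer", "refund_order": "support"}


def authorize(caller: Caller, action: str, resource: Resource) -> bool:
    """Allow a request only when all three layers agree."""
    # 1. Authentication: is there a verified caller at all?
    if caller.user_id is None:
        return False
    # 2. Function-level authorization: may this caller invoke this function?
    required = REQUIRED_ROLE.get(action)
    if required is None or required not in caller.roles:
        return False
    # 3. Object-level authorization: may this caller touch this object?
    #    Assumed rule: support staff may act on any order, others only on their own.
    if "support" in caller.roles:
        return True
    return resource.owner_id == caller.user_id
```

An unauthorized read, a cross-object access, and an unauthorized write each correspond to one of these three checks being skipped or evaluated against a different notion of the caller.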

Another high-risk class centered on secrets, keys, and embedded credentials. These are often underestimated because they can look like mere “configuration exposure.” In practice, they are often immediately usable: to forge requests, decrypt traffic, inherit access into adjacent systems, or turn what would otherwise be an edge-case issue into a reliable attack path.
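As an illustration of how such material can be spotted in static assets, the sketch below scans text for a few well-known credential shapes. The three patterns are illustrative assumptions and far from complete; production scanners rely on much larger rule sets plus entropy checks, and every hit still needs manual validation.

```python
import re

# Illustrative patterns only; not a complete or authoritative rule set.
SECRET_PATTERNS = {
    # AWS access key IDs are "AKIA" followed by 16 uppercase alphanumerics.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    # A quoted value of 8+ characters assigned to a suspicious-looking name.
    "hardcoded_assignment": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|password)\b\s*[:=]\s*['\"]([^'\"]{8,})['\"]"
    ),
}


def find_secret_candidates(text):
    """Return (line_number, pattern_name) pairs for lines that look like secrets."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((line_no, name))
    return hits
```

Run against a front-end bundle or a configuration file, a function like this returns candidates to triage; whether a candidate is actually usable depends on what the credential unlocks.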

API exposure and interface enumeration formed another clear pattern. The danger was not limited to any one endpoint. Rather, the interface layer as a whole was discoverable, understandable, and expandable. Predictable identifiers, sequential object references, internal API descriptions, backend paths, and parallel interfaces all create a capability map for an attacker. Once an attacker reaches that layer, testing no longer remains point-by-point. It becomes system-level enumeration and relationship modeling.
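One simple signal of an enumerable interface is how many observed object identifiers are consecutive integers. The sketch below is a rough heuristic under our own assumptions (the function name and the "gap of exactly 1" rule are hypothetical); it only measures predictability and says nothing about whether authorization is enforced on those objects.

```python
def sequential_ratio(ids):
    """Fraction of adjacent gaps equal to 1 among the sorted, distinct numeric IDs."""
    # Non-numeric identifiers (for example random UUIDs) are ignored.
    numeric = sorted({int(i) for i in ids if i.isdecimal()})
    if len(numeric) < 2:
        return 0.0
    gaps = [b - a for a, b in zip(numeric, numeric[1:])]
    return sum(1 for g in gaps if g == 1) / len(gaps)
```

A ratio near 1.0 suggests an attacker who has seen one identifier can guess its neighbours, which is the first step from point-by-point testing to system-level enumeration.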

More subtle, but often more dangerous, were identity-boundary and session-boundary flaws. In complex systems, “who is making the request” and “which object the request is trying to access” are not always derived from the same place. Cookies, headers, body fields, temporary tokens, guest-state identifiers, and session artifacts may all compete to define identity. When those interpretations diverge, the result is not a simple missing-authentication issue. It is an identity-resolution failure, and those tend to be both harder to detect and more impactful once exploited.
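A defensive counterpart to this failure mode is to resolve identity in exactly one place and to reject any request whose sources disagree. The following is a minimal sketch of that idea; the source names (`session_cookie`, `x_user_id_header`, `body_user_id`) and the fail-closed policy are assumptions for illustration, not a description of the assessed system.

```python
from typing import Dict, Optional


def resolve_identity(claims: Dict[str, Optional[str]]) -> str:
    """Return the single identity a request asserts, or fail closed."""
    # Keep only the sources that actually carry a value.
    asserted = {source: value for source, value in claims.items() if value}
    distinct = set(asserted.values())
    if not distinct:
        raise PermissionError("no identity presented")
    if len(distinct) > 1:
        # Two sources name different users: refuse rather than pick one.
        raise PermissionError(f"conflicting identity sources: {sorted(asserted)}")
    return distinct.pop()
```

For example, `resolve_identity({"session_cookie": "u42", "body_user_id": "u99"})` raises `PermissionError` instead of silently trusting whichever field a downstream handler happens to read.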

We also observed a class of issues outside core business logic, in the operational and boundary layers: cross-origin configuration problems, file access control weaknesses, stale subdomain bindings, infrastructure drift, and mismanaged third-party resource relationships. These are precisely the kinds of problems that development teams often consider peripheral, yet attackers routinely use them as entry points.
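Stale subdomain bindings, in particular, lend themselves to a simple inventory check: compare the DNS aliases you publish against the external targets you still own. The sketch below works on data you supply and performs no DNS lookups; the record format and the `live_targets` set (assumed to hold lowercase names without a trailing dot) are hypothetical.

```python
def dangling_bindings(cname_records, live_targets):
    """Return subdomains whose CNAME target is no longer in the provisioned set."""
    return sorted(
        name
        for name, target in cname_records.items()
        # Normalize the optional trailing dot and the case before comparing.
        if target.rstrip(".").lower() not in live_targets
    )
```

Any subdomain this returns points at a resource that someone else may be able to claim, which is how a seemingly irrelevant record becomes an entry point.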

The most important signal was not simply that 100 critical and high-severity issues existed. It was that 31 of them were critical, meaning critical issues made up 31% of all critical and high-severity findings. That ratio strongly suggests the environment was not merely generating edge-case noise. A meaningful portion of what was exposed had direct exploit value, high impact, or strong attack-chain potential.

Just as important, the findings were not confined to a single technical layer. They spanned the access layer, the identity layer, the object layer, the secret and key material layer, the browser and cross-origin layer, and the edge-asset layer. That kind of distribution implies lateral extensibility: once an adversary gets traction in one layer, there are natural opportunities to pivot into others.

And that leads to the third structural signal: chaining potential. An exposed API surface helps an attacker understand system capabilities. Leaked credentials raise the success rate of follow-on actions. Identity-boundary weaknesses enable cross-object access. Configuration flaws open browser or edge-assisted exploitation paths. Asset drift provides alternative entry points. The most dangerous issue is often not the “worst” individual bug, but the combination that turns several medium-signal weaknesses into one reliable attack path.
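Chaining can be modeled as a graph search: each finding is an edge saying "an attacker who holds X can use this weakness to obtain Y", and an attack path is a route from an external starting point to a sensitive goal. The sketch below uses breadth-first search over invented findings; the node names and labels in `FINDINGS` are hypothetical examples, not results from the exercise.

```python
from collections import deque

# Hypothetical findings as (precondition, outcome, weakness) edges.
FINDINGS = [
    ("internet", "api_map", "exposed internal API description"),
    ("api_map", "service_token", "credential embedded in front-end config"),
    ("service_token", "other_users_objects", "missing object-level check"),
    ("internet", "stale_subdomain", "dangling DNS binding"),
]


def find_chain(findings, start, goal):
    """Return the shortest list of weaknesses leading from start to goal, or None."""
    graph = {}
    for src, dst, label in findings:
        graph.setdefault(src, []).append((dst, label))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for dst, label in graph.get(node, []):
            if dst not in seen:
                seen.add(dst)
                queue.append((dst, path + [label]))
    return None
```

Here `find_chain(FINDINGS, "internet", "other_users_objects")` returns the three weaknesses in order, none of which would necessarily rank as the worst finding on its own.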

A common reaction to results like this is: how can a major, mature, highly engineered system still expose so many serious weaknesses after years of development and multiple rounds of security testing?

The answer is that large systems are never truly static. They evolve through architecture changes, parallel engineering teams, legacy modules, new API layers, external integrations, support platforms, customer-service systems, gateways, file services, and operational tooling. Security testing may have covered a snapshot in time, but the system continues changing after the test ends.

Traditional penetration testing also has natural limits. It operates under time-bounded scopes, fixed project windows, and practical delivery constraints. It remains valuable, but it is closer to high-quality sampling than to exhaustive structural coverage. Parallel interfaces, low-traffic endpoints, internal description layers, identity mismatches across systems, and long-tail boundary flaws are exactly the categories most likely to survive that model.

In many mature environments, the real issue is not that “nobody ever looked.” It is that complexity itself continuously manufactures new security debt. A single interface may be safe alone but become dangerous when paired with another object path. A temporary token may be harmless until combined with a second identity flow. A front-end configuration value may look like a routine operational setting until it turns out to work as a credential. A stale subdomain may look irrelevant until someone claims it.

AI does not create these vulnerabilities. What it changes is the economics of finding them.

Historically, many high-risk issues remained undiscovered not because they were impossibly sophisticated, but because uncovering them required too many low-value actions sustained over too much time: enumerating APIs, comparing responses, organizing object identifiers, correlating front-end and back-end fields, extracting clues from static assets, and linking repeated identity signals across systems.
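Response comparison is a good example of this kind of repetitive work. The sketch below takes responses already recorded for the same endpoints under two identities and flags endpoints where the non-owner received a successful response identical to the owner's. The `Response` type, the function names, and the "identical 2xx body" heuristic are our assumptions; the code makes no network requests.

```python
import hashlib
from typing import NamedTuple


class Response(NamedTuple):
    status: int
    body: str


def fingerprint(resp):
    """Reduce a response to its status code and a hash of its body."""
    return resp.status, hashlib.sha256(resp.body.encode("utf-8")).hexdigest()


def suspicious_endpoints(as_owner, as_other):
    """Endpoints where a non-owner got a 2xx response identical to the owner's."""
    flagged = []
    for endpoint, owner_resp in as_owner.items():
        other_resp = as_other.get(endpoint)
        if other_resp is None:
            continue  # endpoint was not replayed under the second identity
        same = fingerprint(owner_resp) == fingerprint(other_resp)
        if 200 <= other_resp.status < 300 and same:
            flagged.append(endpoint)
    return sorted(flagged)
```

Each comparison is trivial. The cost lies in doing it across thousands of endpoints and identity pairs and then following up on what the differences imply.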

This is exactly where AI becomes transformative. AI can retain long context, aggregate scattered outputs, generate next-step hypotheses, compare subtle response differences, and keep extending a line of inquiry without losing the thread. In other words, it improves not just single-request intelligence, but whole-system coverage across long attack chains.

That means some problems that once required a highly skilled researcher, a lot of patience, and substantial time can now increasingly be discovered more systematically, more consistently, and at higher frequency.

The real shift is not that AI suddenly makes every attack smarter. It is that AI makes complex discovery workflows scalable.

And that cuts both ways. If defenders can use AI-assisted red teaming to find these issues more efficiently, attackers can do the same. Many previously “hidden” risks were never truly hidden; they were just expensive to connect. AI lowers that connection cost.

In that context, AI-driven red teaming is no longer just a nice-to-have. It is becoming a practical requirement for defending complex systems.

Modern systems are expanding faster than purely manual security coverage can keep up with. Interfaces, identities, subsystems, and third-party dependencies are all multiplying. If the attack surface changes continuously but testing only happens periodically, gaps are inevitable.

Many of the most dangerous weaknesses in modern environments are also deeply contextual. Object-level authorization failures, function-level authorization failures, identity mismatches, configuration drift, and cross-system credential inheritance are not purely rule-based problems. They are long-context problems. AI red teaming is especially well-suited to that kind of work because it can preserve context across long chains, form hypotheses, validate them, and adapt based on outcomes.

Most importantly, organizations now need to see their own real attack surface before attackers do. The central question is no longer whether a company has done “some security work.” The real question is whether it has used tools strong enough to inspect itself at the same pace the threat landscape is accelerating.

The value of this exercise was not merely that it exposed 100 critical and high-severity issues. It revealed the structure of risk inside a complex system under real adversarial conditions.

Finally, the work did not end at discovery. Based on the validated findings, impact analysis, and remediation guidance produced during the exercise, the exposed issues were taken through remediation and brought to closure. That matters because security value does not come from reports alone. It comes from completing the full loop: discovery, validation, and remediation.

That is why this exercise matters. It is not just a vulnerability story. It is a field sample of what security looks like in the age of AI.

The question is no longer whether enterprises should use AI for security. The question is whether they are already using AI to see themselves before attackers do.