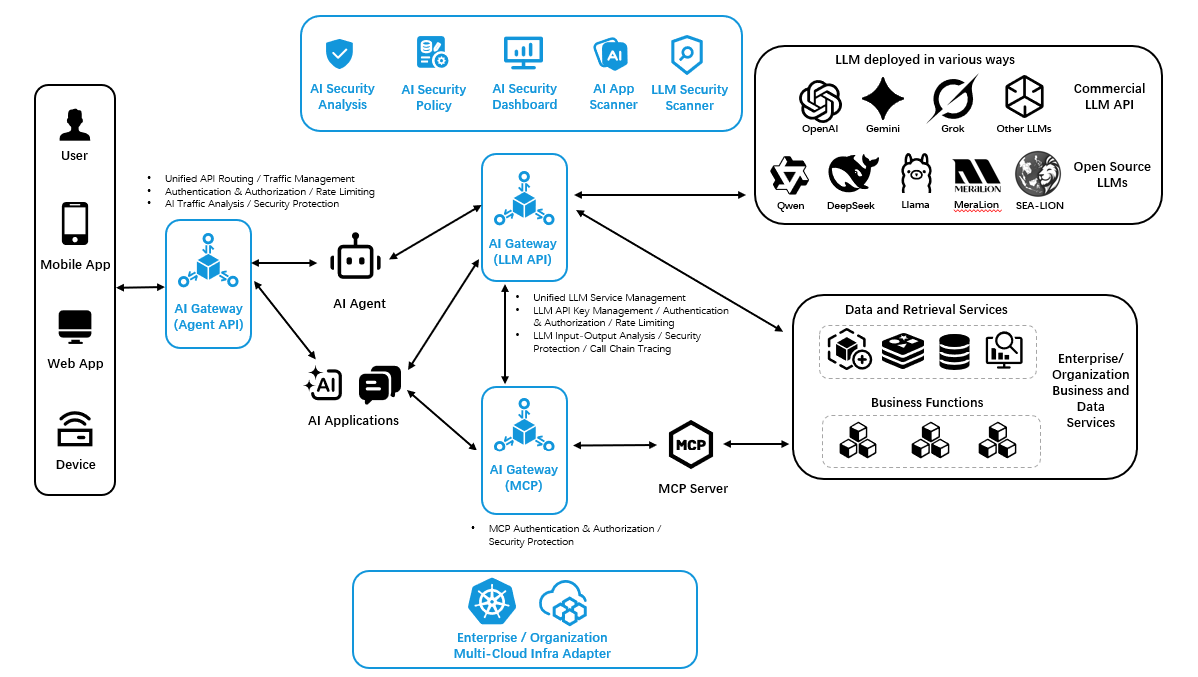

AI Defender is a runtime AI security platform that protects LLM applications and agentic workflows through real-time guardrails, policy enforcement, and threat detection.


AI systems introduce new risks at runtime, including prompt injection, jailbreaks, data exposure, and unsafe tool use. Organisations need security controls built for live AI interactions.

AI risks emerge at runtime

Many AI threats only appear when prompts, responses, and agent actions are already in motion.

External controls are no longer enough.

Security must move closer to the AI interaction layer, not remain outside the system.

Visibility and policy enforcement are critical

Teams need live monitoring and enforceable guardrails to keep AI usage secure and accountable.


AI Defender embeds security directly into live AI interactions, helping organisations detect risks, enforce guardrails, and maintain control as prompts, responses, and agent actions happen.

Embedded in the AI flow

Protection happens within runtime interactions, not only at the system edge.

Real-time risk detection

Identify unsafe prompts, outputs, and behaviours as they occur.

Inline policy enforcement

Block, redact, or flag risky activity before it causes harm.
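
To make the three actions concrete, here is a minimal sketch of an inline policy check. It is an illustration only, not AI Defender's API or rule set: the `enforce` function, the `Verdict` type, and the phrase lists are all hypothetical, and a real deployment would likely use far richer detection than substring and regex matching.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules for illustration only; not AI Defender's actual policies.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BLOCK_PHRASES = ("ignore previous instructions",)
FLAG_PHRASES = ("system prompt",)


@dataclass
class Verdict:
    action: str                      # "allow", "flag", "redact", or "block"
    text: str                        # text passed downstream (empty if blocked)
    reasons: list = field(default_factory=list)


def enforce(text: str) -> Verdict:
    """Apply block, then redact, then flag rules to a prompt or response."""
    lowered = text.lower()

    # Block: stop the interaction entirely.
    for phrase in BLOCK_PHRASES:
        if phrase in lowered:
            return Verdict("block", "", [f"blocked phrase: {phrase}"])

    # Redact: let the interaction continue with sensitive data masked.
    redacted, count = EMAIL.subn("[REDACTED_EMAIL]", text)
    if count:
        return Verdict("redact", redacted, [f"redacted {count} email address(es)"])

    # Flag: pass the text through unchanged but record it for review.
    for phrase in FLAG_PHRASES:
        if phrase in lowered:
            return Verdict("flag", text, [f"flagged phrase: {phrase}"])

    return Verdict("allow", text)
```

Calling `enforce("Mail me at a@b.com")` in this sketch returns a `redact` verdict whose text has the address masked, while a prompt containing a blocked phrase never reaches the model.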


AI Defender sits between users, applications, and AI models. Using an LLM-as-a-Judge approach, it evaluates prompts and responses in real time, applies guardrails, and gives teams visibility into AI risks and usage.

Inspect every interaction

Review prompts and responses as they happen.

Apply runtime guardrails

Block, flag, or redact unsafe activity instantly.

Surface actionable visibility

Show risks, alerts, and usage in one dashboard.
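
The flow described above can be sketched as a small gateway that sits between the caller and the model. This is a rough illustration under stated assumptions, not AI Defender's implementation: the `Gateway` class, the `Event` record, and both stub functions are hypothetical, and the keyword-based `stub_judge` merely stands in for a real judge model so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical types: a judge returns "safe" or "unsafe"; a model returns text.
Judge = Callable[[str], str]
Model = Callable[[str], str]


@dataclass
class Event:
    stage: str     # "prompt" or "response"
    verdict: str   # "safe" or "unsafe"


class Gateway:
    """Checks the prompt before the model call and the response after it."""

    def __init__(self, model: Model, judge: Judge) -> None:
        self.model = model
        self.judge = judge
        self.events: List[Event] = []   # could feed a dashboard or alerting

    def _check(self, stage: str, text: str) -> str:
        verdict = self.judge(text)
        self.events.append(Event(stage, verdict))
        return verdict

    def handle(self, prompt: str) -> str:
        if self._check("prompt", prompt) == "unsafe":
            return "[blocked: prompt rejected by policy]"
        response = self.model(prompt)
        if self._check("response", response) == "unsafe":
            return "[blocked: response withheld by policy]"
        return response


# Stand-ins so the sketch is runnable; a real judge would be an LLM call.
def stub_judge(text: str) -> str:
    return "unsafe" if "password" in text.lower() else "safe"


def stub_model(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = Gateway(stub_model, stub_judge)
print(gateway.handle("hello"))
print(gateway.handle("what is the admin password"))
```

Passing the judge and model in as plain callables keeps the gateway independent of any particular provider, and the `events` list shows where per-interaction verdicts could be collected for monitoring.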